- Blog

- Download torrent resident evil 2

- Adobe acrobat 9 pro serial number - for windows 10

- Import ical to outlook 2013

- Youtube telugu old movies full length

- Free pdf merger online

- Apple dvd player normalize audio

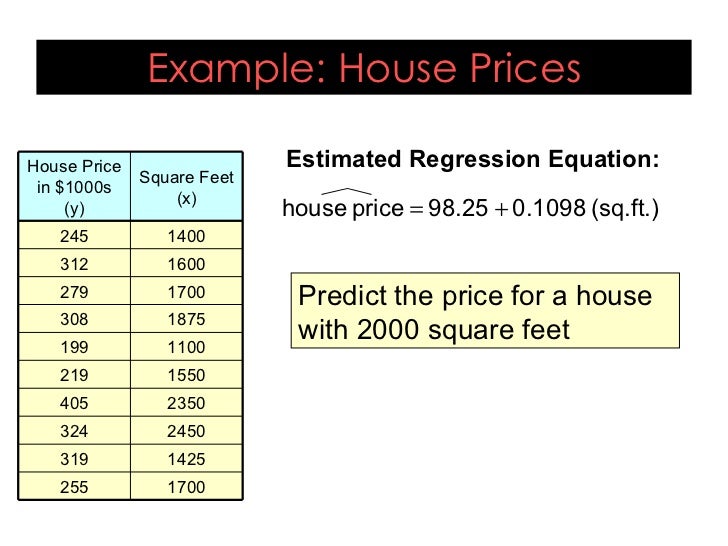

- Linear regression equation example

- Putlocker the secret life of pets full movie

- Screen readers for visually impaired

- Mplab icd 3 usb driver

- Mac os lion installer download

- Hma pro vpn free download for mobile device

- Hp print and scan doctor mac

- Realtek rtl8188ee 802-11bgn driver download free

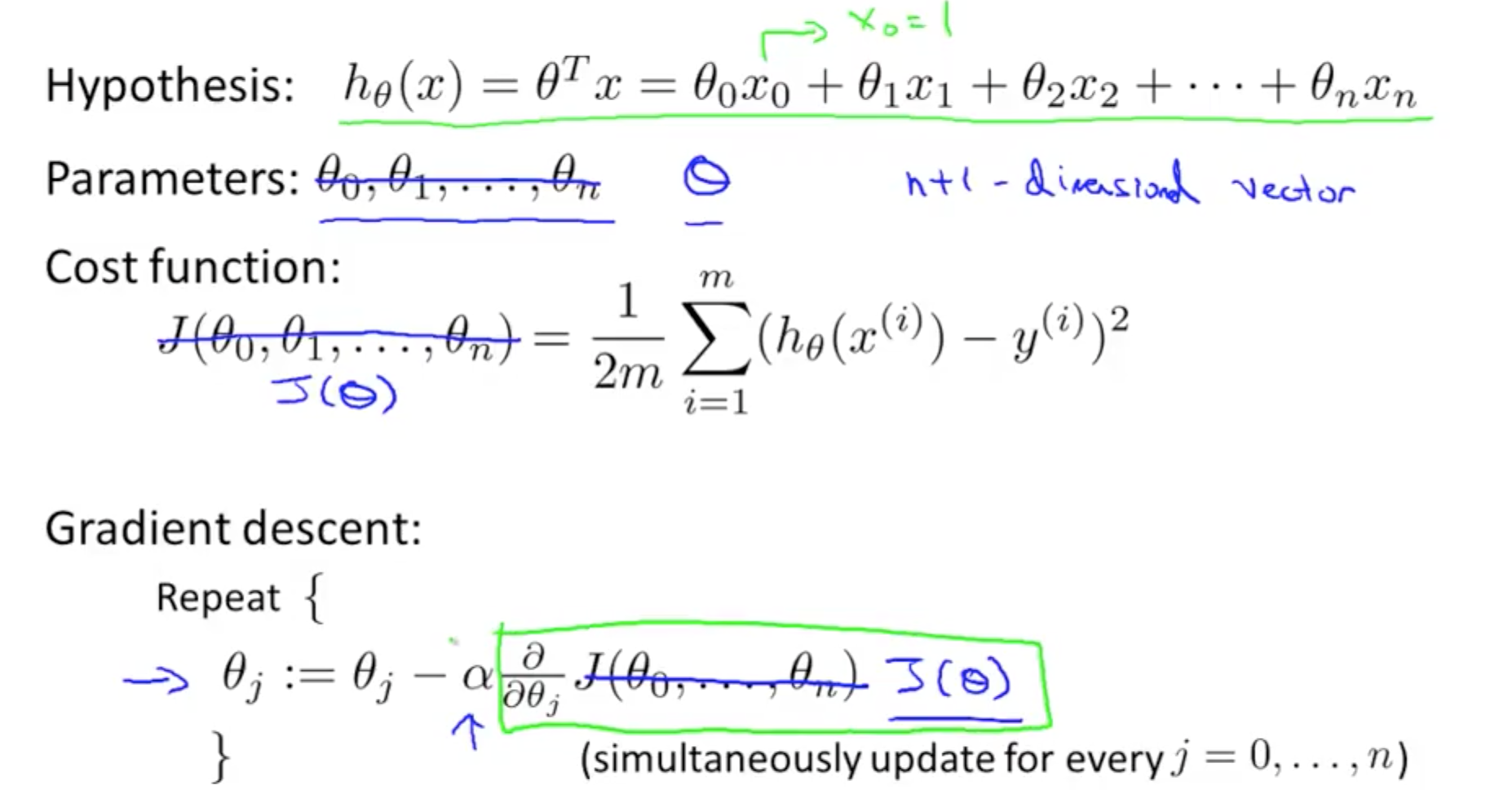

- The Normal Equation vs Gradient Descent
- Deciphering When to Use the Normal Equation
- The Normal Equation from Scratch in Python

Most problems we encounter can be solved in several ways. For example, if we wanted to get from one side of a room to the other, we could walk around the room until we arrive at the opposite side, or we could just cut across. The normal equation is an instance of this idea: it's just another way to solve a problem.

What problem, you ask? We'll cover that in the remainder of this article. For now, all you need to know is that it's an effective approach that can save you a lot of time when implementing linear regression under certain conditions. Let's dive deeper…

What is the Normal Equation?

The normal equation is a closed-form solution used to find the value of θ that minimizes the cost function. Another way to describe the normal equation is as a one-step algorithm used to analytically find the coefficients that minimize the loss function. Both descriptions work, but what exactly do they mean? We will start with linear regression.

Linear regression makes a prediction, y_hat, by computing the weighted sum of the input features plus a bias term. Mathematically, it can be represented as follows:

$$\hat{y} = \theta_0 + \theta_1 x_1 + \theta_2 x_2 + \dots + \theta_n x_n$$

where θ represents the parameters and n is the number of features. Essentially, all that occurs in the above equation is that each parameter is multiplied by its feature and the products are summed, which is the dot product of θ and x (with x_0 = 1 so that θ_0 acts as the bias term). Thus, a more concise way to represent this is to use its vectorized form:

$$\hat{y} = \theta^T x$$

Given this approximate target function, we can use our model to make predictions. To determine if our model has learned well, it's important that we measure its performance on the training data. For this purpose, we compute a loss function. The goal of the training process is to find the values of theta (θ) that minimize the loss function. Here's how we can represent our loss function mathematically, using the mean squared error over the m training instances:

$$J(\theta) = \frac{1}{m} \sum_{i=1}^{m} \left(\theta^T x^{(i)} - y^{(i)}\right)^2$$

In the above equation, theta (θ) is an n + 1 dimensional vector, and our loss function is a function of that vector. Consequently, the partial derivative of the loss function, J, has to be taken with respect to each parameter θ_j in turn and set to zero. Following this process and solving for all of the values of θ from θ_0 to θ_n will result in the values of θ that minimize the loss function.

Working through the solution for the parameters θ_0 to θ_n using the process described above results in an extremely involved derivation procedure. Take a look at the formula for the normal equation, which gives the result directly:

$$\theta = (X^T X)^{-1} X^T y$$

- θ → The parameters that minimize the loss function
- X → The input feature values for each instance
- y → The vector of output values for each instance

The Normal Equation vs Gradient Descent

While both methods seek to find the parameters theta (θ) that minimize the loss function, the two solutions approach the problem very differently. Since we've already covered how the normal equation works in 'What is the Normal Equation?', in this section we will briefly touch on gradient descent and then describe the ways in which the two techniques differ.

Gradient descent is one of the most widely used algorithms in machine learning. It's deployed to iteratively find the parameters theta (θ) that minimize the loss function. The process starts by evaluating the model's performance. Next, the partial derivative of the loss function is calculated, which gives the slope at the current point. Lastly, we take a step proportional to the negative gradient to descend towards the minimum of the loss function by updating the current set of parameters:

$$\theta := \theta - \alpha \nabla_\theta J(\theta)$$

where α is the learning rate. This process is repeated until convergence at the minimum of the loss function.

The most obvious way in which the normal equation differs from gradient descent is that it's analytical. Gradient descent takes an iterative approach, which means our parameters are updated gradually until convergence. Another subtle difference baked into this is that gradient descent requires us to define a learning rate that controls the size of the steps taken towards the minimum of the loss function.
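As a rough illustration of how the normal equation and gradient descent compare, the sketch below fits the same linear regression twice using NumPy: once analytically and once iteratively. The helper names (`add_bias_column`, `normal_equation`, `gradient_descent`), the synthetic data, the mean squared error loss, the learning rate, and the iteration count are all assumptions chosen for this example rather than details from the article.

```python
import numpy as np


def add_bias_column(X):
    """Prepend a column of ones so that theta[0] acts as the bias term."""
    return np.c_[np.ones((X.shape[0], 1)), X]


def normal_equation(X, y):
    """Closed-form solution: theta = (X^T X)^(-1) X^T y."""
    X_b = add_bias_column(X)
    # Solve (X^T X) theta = X^T y instead of forming the inverse explicitly.
    return np.linalg.solve(X_b.T @ X_b, X_b.T @ y)


def gradient_descent(X, y, learning_rate=0.1, n_iterations=2000):
    """Batch gradient descent on the mean squared error loss (assumed loss)."""
    X_b = add_bias_column(X)
    m = X_b.shape[0]
    theta = np.zeros(X_b.shape[1])  # start from all-zero parameters
    for _ in range(n_iterations):
        # Gradient of (1/m) * sum((X_b @ theta - y) ** 2) with respect to theta.
        gradient = (2.0 / m) * X_b.T @ (X_b @ theta - y)
        theta = theta - learning_rate * gradient  # step against the gradient
    return theta


# Hypothetical synthetic data: y = 4 + 3x plus a little Gaussian noise.
rng = np.random.default_rng(seed=0)
X = rng.uniform(0.0, 2.0, size=(100, 1))
y = 4.0 + 3.0 * X[:, 0] + rng.normal(0.0, 0.1, size=100)

theta_ne = normal_equation(X, y)
theta_gd = gradient_descent(X, y)

print("normal equation: ", theta_ne)
print("gradient descent:", theta_gd)
```

With a suitable learning rate and enough iterations, both approaches should land on nearly the same parameters. The normal equation gets there in a single solve, while gradient descent depends on the learning rate and iteration count being chosen sensibly. Using `np.linalg.solve` rather than an explicit matrix inverse is a design choice made here for numerical stability.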

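Taking the partial derivative with respect to each θ_j one at a time is lengthy, but in matrix form the derivation of the normal equation fits in a few lines. The sketch below assumes the loss is the mean squared error over m training instances, that X is the m × (n + 1) matrix of inputs with a leading column of ones, and that XᵀX is invertible. These are assumptions made for illustration.

```latex
\begin{aligned}
J(\theta) &= \frac{1}{m}\,(X\theta - y)^T (X\theta - y) \\
\nabla_\theta J(\theta) &= \frac{2}{m}\, X^T (X\theta - y) \\
\nabla_\theta J(\theta) = 0 \;&\Longrightarrow\; X^T X\,\theta = X^T y \\
&\Longrightarrow\; \theta = (X^T X)^{-1} X^T y
\end{aligned}
```

The constant factor in front of the loss does not affect the result, since it cancels when the gradient is set to zero.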